Is Anthropic Operating More Ethically than OpenAI?

The AI companies that court government power, the one that draws lines, and what history says about the difference.

The question of corporate ethics in artificial intelligence has crystallized around two companies that emerged from the same intellectual milieu yet have chosen markedly different paths. Anthropic, founded in 2021 by former OpenAI researchers led by Dario Amodei, positioned itself explicitly as a safety-focused alternative to its predecessor. OpenAI, despite its original nonprofit charter and safety rhetoric, has increasingly embraced commercial and governmental partnerships that reveal a more pragmatic approach to power.

Both companies emerged from concerns about AI alignment and safety, but their founding narratives diverge sharply. Dario Amodei, OpenAI's former vice president of research and a Princeton physics PhD, left with his sister Daniela and several colleagues amid growing unease about OpenAI's trajectory under Sam Altman. The Amodeis had watched Altman, a former Y Combinator president whose background lay in startups rather than AI research, transform OpenAI from a research lab into a Microsoft-partnered entity increasingly focused on rapid deployment rather than careful safety research. Anthropic's Constitutional AI approach trains models to critique and revise their own outputs against a written set of principles. It represents not just a technical methodology but a philosophical statement about building AI systems with explicit ethical constraints.

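The clearest test of these competing philosophies has emerged in government partnerships, though the difference is not a simple yes or no to defense work. Anthropic has not refused such work outright. In November 2024 it announced a partnership with Palantir and Amazon Web Services to make its Claude models available to U.S. intelligence and defense agencies. What distinguishes the company is where it has drawn lines. Its usage policy has continued to prohibit uses such as domestic surveillance and weapons design, including for government customers, and the company has held to those limits even when they have reportedly frustrated administration officials. That stance carries real costs, because defense and security work represents some of the most stable and well-funded opportunities in the AI sector.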

OpenAI under Altman has moved in the other direction. In January 2024 the company removed the blanket ban on "military and warfare" uses from its usage policies, and in December 2024 it announced a partnership with the defense contractor Anduril. It has actively courted government partnerships and has signaled openness to working with the incoming Trump administration. That is a particularly stark choice given the Trump administration's record of deploying surveillance technology against people in the United States, from facial recognition systems to social media monitoring programs aimed at protesters and immigrants. Altman's public statements suggest he views government partnership as inevitable and necessary for maintaining American AI leadership, but that framing obscures the specific nature of the partnerships being contemplated.

The contrast becomes sharper when examining the broader ecosystem of AI-government collaboration. Palantir, the data analytics company co-founded by Peter Thiel, offers a cautionary example of how AI capabilities become embedded in the state surveillance apparatus. Palantir's systems have been used for immigration enforcement, predictive policing, and battlefield intelligence, applications that demonstrate how quickly analytical tools become instruments of control. The company's success has made it a template that other AI companies study, creating pressure to embrace similar partnerships regardless of ethical considerations.

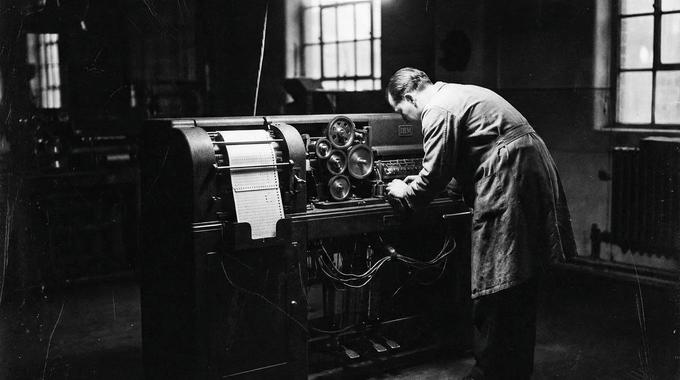

Altman's willingness to engage with authoritarian-leaning governance structures reflects a broader tradition of corporate accommodation with power, one whose precedents long predate Silicon Valley. Beginning in 1933, IBM's German subsidiary, Dehomag, supplied the Hollerith punch card systems that Nazi Germany used to run its censuses and, later, to administer concentration camps. As Edwin Black documents in IBM and the Holocaust, these systems were configured to identify Jewish populations and were later used in managing deportations. Black argues that IBM leadership knew how the systems were being used but prioritized market access over moral considerations.

Similar patterns emerged across industries during this period. Ford's German subsidiary, Ford-Werke, built military trucks for the Wehrmacht and used forced labor during the war. The clothing firm of Hugo Boss, a Nazi Party member, manufactured uniforms for the SS and other Nazi organizations. These were not simply cases of companies trapped by circumstance. They reflected active choices to maintain profitable relationships with authoritarian regimes even as those regimes' intentions became unmistakable.

The chemical industry offers another instructive parallel. IG Farben, the German chemical and pharmaceutical conglomerate, did not simply supply materials to Nazi camps. It built a synthetic rubber plant at Auschwitz that ran on slave labor drawn from the camp, it held a major stake in Degesch, the firm that supplied the Zyklon B gas used in the extermination chambers, and drugs from its Bayer division were tested on camp prisoners. The complicity was not passive; it was structural and profitable.

The parallel between these historical cases and contemporary AI partnerships does not lie in the scale of immediate harm: current American surveillance systems are nowhere near genocidal. It lies in the precedent being established and the trajectory being normalized. When AI companies integrate their systems with the government's surveillance apparatus, they create infrastructure that can be redirected toward more severe applications as political circumstances evolve.

The technical capabilities being developed today far exceed what previous authoritarian regimes could deploy. Facial recognition systems can identify individuals in crowds with unprecedented accuracy. Natural language processing can analyze communications at massive scale. Predictive systems promise to flag potential dissidents before they act. These tools, once embedded in government systems, become extremely difficult to remove or constrain.

Anthropic's refusal to lend its models to domestic surveillance represents more than corporate virtue signaling. It reflects an understanding that certain technological capabilities, once deployed in authoritarian contexts, cannot be recalled. The historical record is unambiguous on this point: companies that accommodated rising authoritarianism in exchange for market access did not moderate those regimes. They enabled them.

The choice facing AI companies today is not between idealism and pragmatism. It is between two different understandings of what pragmatism means. Altman's version treats current political arrangements as stable and optimizes for position within them. The Amodei version treats those arrangements as contingent and optimizes for the world that might exist in ten years. One of these bets will prove correct. The historical evidence suggests it will not be the one placing its chips on the permanence of power.